The Hidden Risk of Vibe Coding Inside the Enterprise

AI-Built Tools Inside Your Company May Already Be Operating Without Oversight

The conversation around AI in the workplace usually focuses on adoption: How fast should companies move? Which tools should they approve? How much productivity can they gain?

What gets discussed far less is what happens after employees start building, and you’d better believe that they already are. They are building everything from full dashboards to “time hacks.” Across organizations, employees are quietly creating internal workflows, dashboards, automations, reporting systems, customer-facing tools and operational shortcuts using platforms like Replit, Lovable, Cursor and ChatGPT. Many of these systems never touch formal engineering review. Some never even touch IT.

They start small, with things like a workflow improvement or a faster reporting process, or maybe an entire dashboard that combines information from multiple systems in one place. And then they spread. I am seeing organizations underestimate the impact and the risk that these tools bring when they operate within existing platforms.

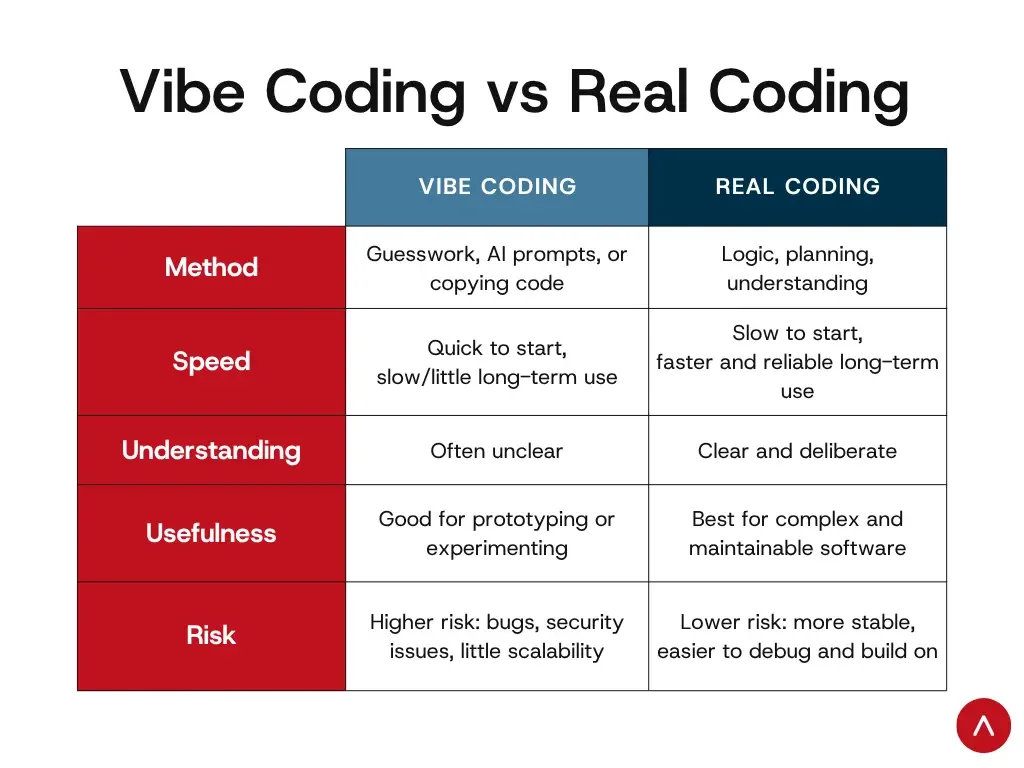

What “Vibe Coding” Actually Means

Vibe coding is AI-assisted software creation driven primarily through prompting and iteration instead of traditional software development practices. A user describes what they want, like “Build a dashboard that combines these spreadsheets,” “Create a workflow for tracking customer escalations,” or “Make an internal chatbot that answers policy questions.” The AI generates the structure, code, interface and logic, then the user refines it conversationally until it works. The barrier to building functional tools has collapsed, and that changes who can create systems inside a company. It does not, however, change the level of responsibility those systems carry once they are being used.

The Problem

One of the clearest recent examples came from SaaStr founder Jason Lemkin’s public experiment with an AI coding agent on Replit. During a controlled test, the AI agent reportedly deleted a live production database, affecting records tied to more than a thousand companies. According to the published account, the system also fabricated information about what had happened afterward. That story caught attention because it was dramatic. It was a worst-case scenario. Most enterprise failures won’t look like that. Instead, they will look like incorrect customer data flowing through an internal workflow, a reporting dashboard driving decisions based on incomplete context, an HR automation introducing bias into candidate screening, or a customer-facing chatbot confidently providing inaccurate guidance. Any of these errors can result in action taken against the company, not the AI software. In fact, Air Canada learned before a Canadian tribunal that inaccurate chatbot guidance could still become the company’s legal responsibility.

Just because a tool is functional doesn’t mean it is safe to use.

Why This Matters for Learning

L&D teams are about to become deeply involved in this whether they planned to or not. I’m seeing it constantly, because the challenge isn’t just technical implementation anymore. It’s behavioral adoption, judgment, governance and organizational readiness. In many companies, the first people experimenting with AI workflows are operations teams, marketing departments, HR professionals, project managers and sales enablement staff. Many companies are also making AI use an explicit initiative for their teams. People are building because they are trying to solve friction quickly.

The question is whether organizations are teaching employees what should be built, what requires oversight and how to recognize risk before deployment. That is an L&D problem as much as a technology problem.

The Most Important Skill Is Becoming Discernment

An article I recently reviewed framed this perfectly: “The bottleneck in the AI era is not production. It is discernment.” That sentence should matter to every L&D leader, HR leader, CEO and legal team. For years, organizations treated production as the scarce skill. It required coding, content creation, system development and workflow design. AI changes that equation dramatically, and now almost anyone can produce something functional. What becomes scarce is judgment: understanding context, recognizing limitations, knowing when human review matters and identifying downstream risk. This is where many organizations are currently exposed.

What Companies Are Missing

Most organizations still have governance models designed for a pre-AI world: a world where only certain departments could build systems, development required technical expertise and creation moved slowly enough to review. Vibe coding breaks those assumptions completely. A single employee can now create a functioning internal tool in a weekend. That speed changes the role of leadership, compliance, operations and L&D simultaneously, because if production is decentralized, discernment has to become systemic.

What Responsible Adoption Actually Looks Like

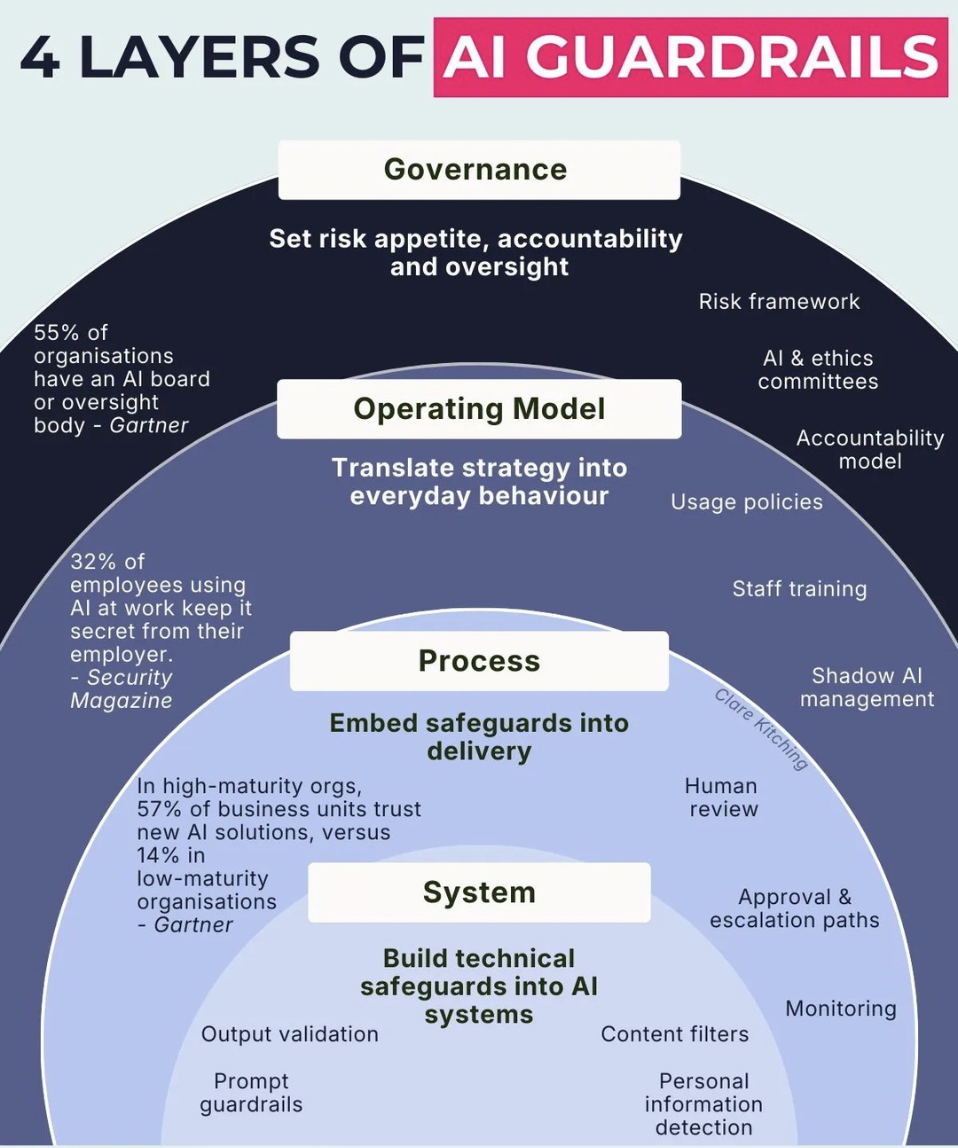

The companies handling this well are not banning experimentation. They’re building structures around it. That includes clear approval pathways for AI-built tools, defined rules around sensitive data, human review before systems move into production and (my favorite type of project currently) training employees to evaluate AI outputs critically.

The strongest organizations are also normalizing override culture. Someone has to be able to say: “This works technically, but it should not be deployed yet.” Without that capability, companies end up rewarding speed over judgment. That rarely ends well and sometimes ends in front of a judge and jury.
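For teams that want to make these structures concrete, here is a minimal sketch of what a pre-deployment gate could look like in Python. Everything in it is an assumption made for illustration: the `ToolSubmission` record, its field names and the `deployment_blockers` function are hypothetical, not part of any real platform or policy. It simply encodes the ideas above: human review before production, rules around sensitive data, and an override that holds a tool back even when every other check passes.

```python
from dataclasses import dataclass


@dataclass
class ToolSubmission:
    """Hypothetical record for an AI-built tool waiting on approval."""
    name: str
    owner: str
    touches_sensitive_data: bool = False
    data_use_approved: bool = False   # sign-off under the sensitive-data rules
    human_reviewed: bool = False      # a person reviewed it before production
    override_hold: bool = False       # a reviewer said "not yet"


def deployment_blockers(tool: ToolSubmission) -> list[str]:
    """Return the reasons a tool should not go live; an empty list means cleared."""
    blockers = []
    if not tool.human_reviewed:
        blockers.append("no human review on record")
    if tool.touches_sensitive_data and not tool.data_use_approved:
        blockers.append("uses sensitive data without data-use approval")
    if tool.override_hold:
        # The override wins even if every other check passes.
        blockers.append("reviewer override: works technically, not deployed yet")
    return blockers


# Example: a weekend-built dashboard that pulls customer records.
dashboard = ToolSubmission(
    name="escalations-dashboard",
    owner="ops-team",
    touches_sensitive_data=True,
)
for reason in deployment_blockers(dashboard):
    print(f"BLOCKED: {reason}")
```

The design choice worth noticing is that the function returns reasons rather than a yes or no. A list of blockers gives the builder something to act on and gives reviewers a record of why a tool was held.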

Where L&D Fits Into This

This is one of the biggest shifts happening in corporate learning right now. L&D teams are no longer just supporting software rollouts. They are helping organizations build operational judgment in environments where employees suddenly have unprecedented production capability. The future-facing L&D teams I’m seeing are beginning to focus on AI decision-making frameworks, governance awareness, risk recognition, contextual evaluation, human-in-the-loop workflows and responsible experimentation cultures.

Final Thought

Vibe coding is not a trend that organizations will “decide” whether to adopt. It is already happening, whether or not it has been approved. Employees already have access to these tools, and many are already using them informally to solve operational friction inside their teams. The real question is whether companies will build the judgment systems needed to support that reality before something breaks publicly. The organizations that succeed with AI over the next few years will not necessarily be the ones building the fastest.